The Social Life Of The Internet Of Things

Thursday, July 23, 2015 at 3:16PM

This idea became part of an awesome O'Reilly Foo Camp 2015 session. It's an evolving concept I call the Social Memes Of The Internet Of Things.

JOIN ME IN AUSTIN, TX FOR A SXSW 2016 #ROBOSTACHE brainstorming session!

Just as with your human friends on Twitter, interactions between machines in a social networking context will make things happen that otherwise never would.

Questions answered in this session:

-How can the things that make up the Internet Of Things become friends?

-How can Machine Learning, along with Social Networking signals from human users, create, amplify and disperse new experiences in the IoT and beyond?

-What client experiences are enabled by a curated, dynamic and extensible machine-to-machine social graph?

Smart Dancefloor Social IoT Use Case: Interview with Olin Hyde, CEO of Englue, an AI company employing Machine Learning technology

A successful platform for Social IoT will enable anyone, not just programmers and engineers, to build and share useful interactions between machines at will. One of the IoT social flow examples I came up with involves a camera that counts people, say for a nightclub, telling the staff how much space they have at any given time for code compliance and safety reasons.

Along with the People Counter camera, there is the "Shazammer", a little box that listens to songs playing in the space, creating a timeline of artist, genre, beat structure, etc. It also creates a stream of income: nearby patrons who hear a song can hit it up for the artist link and are directed to an affiliate purchase.

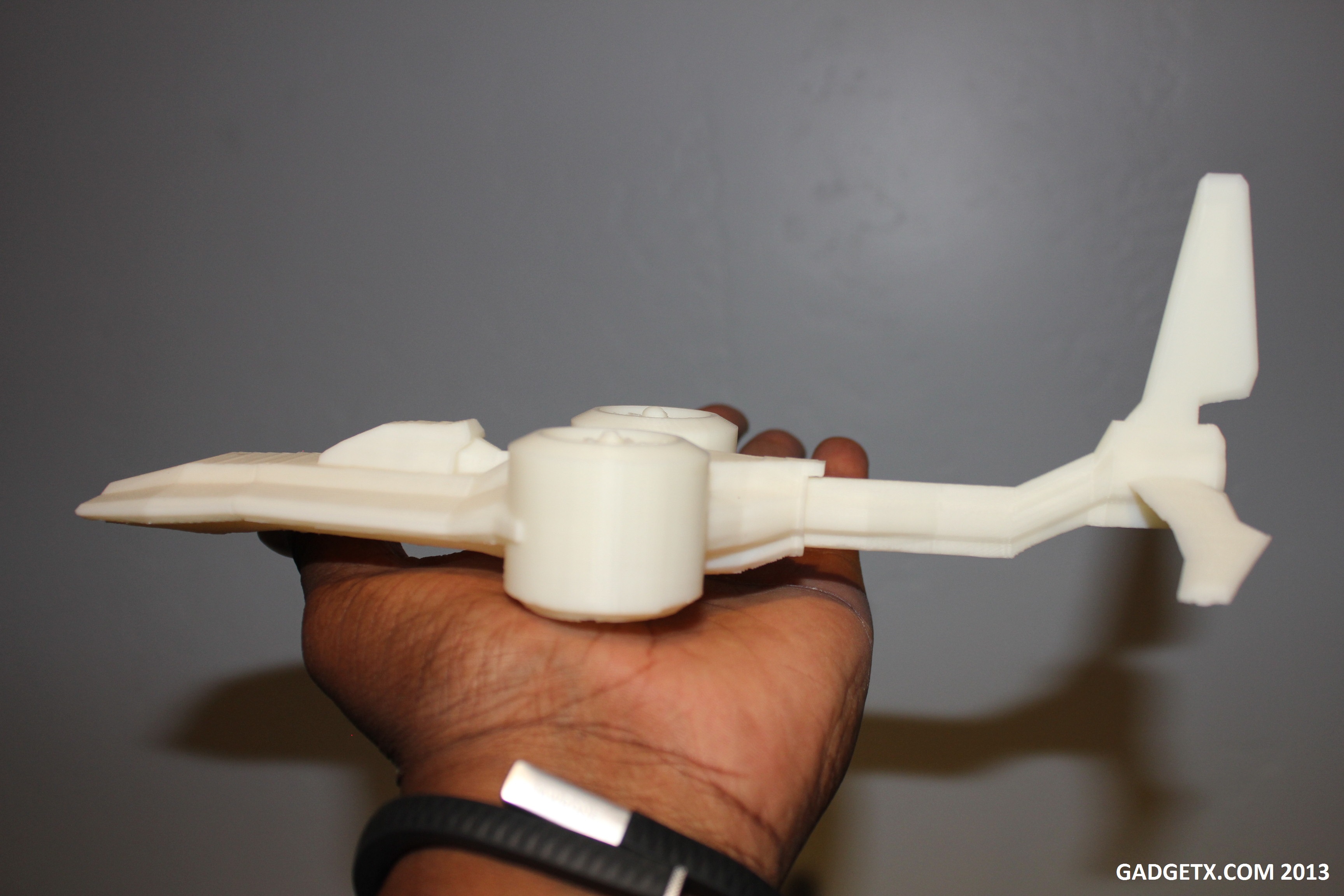

So both of these machines have day jobs, their own reasons to be plugged in and active. They are also products of customized, on-demand manufacturing (see Nascent Objects below). Introducing the devices via social-networking-like friending could result in a Social IoT "Meme", a new mash-up service that uses the timeline output of each device to produce new and useful data. In this case the Meme, call it #IsThisClubBumpin, would correlate the music being played with how many folks are on the dance floor. The result is piped into the timeline of the Meme, which could be available as a service to other apps, like Google Maps for example. You might browse clubs on a map and notice small icons dancing about the satellite overhead view, synced to your music genre preferences, indicating a good time is being had by all within.
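To make the mash-up a little more concrete, here is a rough Python sketch of how an #IsThisClubBumpin Meme might join the two device timelines. Everything in it is an assumption made for illustration: the `CountEvent` and `SongEvent` record shapes, the function names, and the sample data are all hypothetical, and no real People Counter or "Shazammer" API is implied. It simply averages the headcount readings that fall inside each song's play window and writes the result to the Meme's own timeline.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CountEvent:
    """Hypothetical People Counter reading."""
    t: int        # seconds since the doors opened
    people: int   # heads counted on the dance floor


@dataclass(frozen=True)
class SongEvent:
    """Hypothetical "Shazammer" timeline entry."""
    start: int    # seconds since the doors opened
    end: int
    artist: str
    genre: str


def is_this_club_bumpin(counts, songs):
    """Build the Meme timeline: one entry per song with its average headcount."""
    timeline = []
    for song in songs:
        # Keep only the readings taken while this song was playing.
        during = [c.people for c in counts if song.start <= c.t < song.end]
        if not during:
            continue  # no readings for this song, so nothing to report
        timeline.append({
            "t": song.start,
            "artist": song.artist,
            "genre": song.genre,
            "avg_people": mean(during),
        })
    return timeline


def crowd_by_genre(timeline):
    """Summarize the Meme timeline as average headcount per genre."""
    by_genre = defaultdict(list)
    for entry in timeline:
        by_genre[entry["genre"]].append(entry["avg_people"])
    return {genre: mean(values) for genre, values in by_genre.items()}


# Made-up sample night: one reading per minute, three songs.
counts = [CountEvent(0, 40), CountEvent(60, 50), CountEvent(120, 90),
          CountEvent(180, 110), CountEvent(240, 60)]
songs = [SongEvent(0, 120, "Artist A", "house"),
         SongEvent(120, 240, "Artist B", "disco"),
         SongEvent(240, 300, "Artist C", "house")]

timeline = is_this_club_bumpin(counts, songs)
print(crowd_by_genre(timeline))
```

A map app subscribing to this Meme could then compare the per-genre numbers against a user's genre preferences to decide which clubs get the dancing icons. A real service would likely need smarter handling of gaps, overlapping songs, and venue capacity, none of which is attempted here.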

The Internet Of Things is about to get a lot more interesting because of you and Nascent Objects: Interview with Baback Elmieh, founder and CEO.